What AI Gets Wrong About Your Shop — And Why It Looks So Right

Are you using AI to evaluate your shop’s website — but not sure if you can trust what it tells you?

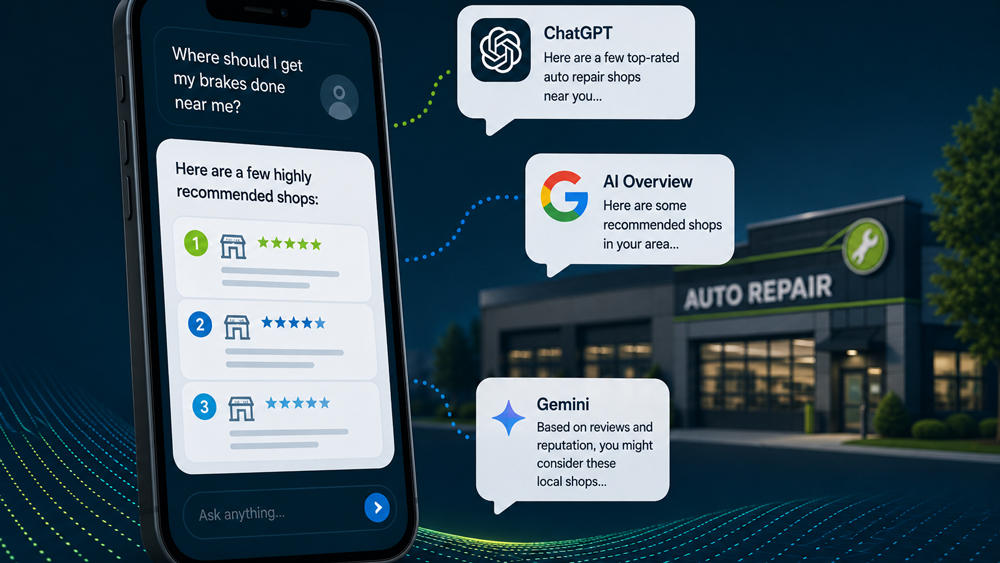

In our first post in this series, we talked about using AI tools like ChatGPT and Gemini to evaluate your auto repair shop’s website and why the output, while often useful, isn’t the same as verified data. We talked about the difference between what AI illuminates and what it actually knows.

That post resonated. A lot of you have been running prompts, sharing the output with your teams, and asking smart questions about what to trust. That’s exactly the right instinct.

Today we’re going to go a step further. I want to walk you through the specific places where AI gets it wrong, and, more importantly, why it looks so right when it does.

AI doesn’t make obvious mistakes. If it told you your brake shop was actually a bakery, you’d laugh and move on. The real issue is that when AI is wrong, it’s wrong in a way that looks exactly like it’s right.

Same formatting. Same confidence. Same level of detail. There’s no warning label.

Here’s where to look.

The URL Myth

This catches almost everyone.

You paste your website URL into ChatGPT and ask it to analyze your site. It comes back with a detailed breakdown of your messaging, your content gaps, your calls to action. Specific. Structured. It reads like the tool went through your site page by page.

But did it?

Maybe. Maybe not. Some versions of ChatGPT can browse the web. Some can’t. Free and paid have different capabilities, and those capabilities keep changing. Gemini, Perplexity, and other tools each handle URLs differently. Even when a tool can browse, it might read your homepage and skip everything else. Or pull partial content and fill in the rest from general knowledge about auto repair websites.

The output looks the same either way.

Here’s a simple test: ask the tool directly. “Did you actually access the URL I provided, or are you basing this on other information?” The answer is often more honest than the initial output.

The Demographic Data Problem

This is where AI audits create the most damage.

Ask ChatGPT or Gemini to profile the demographics around your shop’s ZIP code. You’ll get median household income, age distribution, vehicle ownership rates, educational attainment. Clean table. Your ZIP code cited. It reads like someone pulled a report from Census data.

They didn’t. And neither did the AI.

These tools don’t query the U.S. Census Bureau in real time. They don’t pull from any demographic database. They generate numbers that are plausible for the type of area they associate with your ZIP code.

We’ve checked AI-generated profiles against real Census data across several ZIP codes and found:

- Income estimates off by tens of thousands of dollars.

- Household composition percentages that don’t match reality.

- Vehicle ownership numbers that look like regional averages, not local data.

Confident formatting and specific numbers feel like data. They’re not always data.
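You can run this kind of check by hand or in a spreadsheet. For anyone who prefers a script, here is a minimal sketch in Python. It assumes you have copied the AI’s figures and the matching figures from a real source into two dictionaries. The function name, the metric names, the 10% tolerance, and every number below are hypothetical placeholders for illustration, not real data for any ZIP code.

```python
def flag_discrepancies(ai_estimates, verified, tolerance=0.10):
    """Return metrics where the AI figure differs from the verified figure
    by more than `tolerance` (a fraction, so 0.10 means 10%)."""
    flagged = {}
    for metric, verified_value in verified.items():
        if metric not in ai_estimates or verified_value == 0:
            continue  # nothing to compare, or relative error is undefined
        relative_error = abs(ai_estimates[metric] - verified_value) / abs(verified_value)
        if relative_error > tolerance:
            flagged[metric] = round(relative_error, 3)
    return flagged


# Hypothetical placeholder numbers, for illustration only.
ai_estimates = {"median_household_income": 90000, "owner_occupied_pct": 62.0}
verified = {"median_household_income": 60000, "owner_occupied_pct": 60.0}

print(flag_discrepancies(ai_estimates, verified))
```

With these placeholder inputs, only the income figure is flagged, because it is 50% away from the verified value. How far off is too far is a judgment call, so treat the tolerance as something to adjust rather than a rule.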

The information you actually need to make marketing decisions already exists. It’s in your CRM:

- Who your customers are.

- What services they’re buying.

- What your average repair order looks like.

- What your retention rate is.

That’s real data about your real customers. It will always be a better foundation than AI-generated estimates about your ZIP code.
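Two of those numbers are simple arithmetic once you export your repair orders. The sketch below is one way to compute them in Python, under stated assumptions: the field names (`customer_id`, `year`, `total`) are hypothetical and will differ by CRM, the sample records are made up, and “retention” is defined here as the share of last year’s customers who returned this year. Your shop or your CRM may define it differently.

```python
from collections import defaultdict


def average_repair_order(orders):
    """Total revenue divided by the number of repair orders."""
    if not orders:
        return 0.0
    return sum(order["total"] for order in orders) / len(orders)


def retention_rate(orders, prior_year, current_year):
    """Share of customers seen in prior_year who came back in current_year."""
    customers_by_year = defaultdict(set)
    for order in orders:
        customers_by_year[order["year"]].add(order["customer_id"])
    prior_customers = customers_by_year[prior_year]
    if not prior_customers:
        return 0.0
    returned = prior_customers & customers_by_year[current_year]
    return len(returned) / len(prior_customers)


# Made-up records standing in for a CRM export (hypothetical field names).
orders = [
    {"customer_id": "C1", "year": 2023, "total": 400.0},
    {"customer_id": "C2", "year": 2023, "total": 600.0},
    {"customer_id": "C1", "year": 2024, "total": 500.0},
    {"customer_id": "C3", "year": 2024, "total": 500.0},
]

print(average_repair_order(orders))        # 500.0
print(retention_rate(orders, 2023, 2024))  # 0.5
```

In the sample, four orders totaling $2,000 give a $500 average repair order, and one of the two 2023 customers returned in 2024, so retention is 50%.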

The Competitor Comparison Problem

“Compare my shop to competitors in my area.”

This is the second most common AI request we see. And the output looks impressive. Structured side-by-side. Estimated ratings. Assumed service offerings. Suggested differentiators.

It looks like competitive intelligence.

But think about what the AI would actually need to make that comparison real:

- Real Google rankings.

- Review velocity and trajectory.

- Actual service pricing.

- Revenue, bay count, certifications, fleet contracts.

Little of that is reliably available to an AI tool. Most of it is private, and the tool may never have looked up the parts that are public.

What you’re getting is a framework built from fragments of public information. Useful for thinking about positioning. Not useful as the basis for business decisions.

The Confidence Problem

There’s a thread running through all of this.

When AI is right, it sounds confident. When AI is wrong, it sounds exactly the same way.

No change in tone. No hedging. No asterisk. A perfectly accurate observation about your website and a completely fabricated demographic statistic are delivered with identical confidence and identical authority.

The AI doesn’t know the difference. So it can’t signal it to you.

Before I built software for auto repair shops, I built information systems for public and academic libraries. Platforms designed to help people find reliable information in environments full of unreliable information. That’s not a bad background for this moment. The tools are different. The problem is the same.

A quick framework that helps. For any claim AI makes about your business, ask yourself:

- Fact? Something I can verify.

- Inference? A reasonable conclusion, but not independently confirmed.

- Guess? No supporting evidence. Presented as a finding.

Start sorting AI output into those three buckets. You’ll never read an AI analysis the same way again.

So What Should You Do?

None of this means you should stop using AI. It means you should use it with your eyes open.

Keep using AI for what it does well. Drafting content. Brainstorming ideas. Getting a second perspective on messaging. Generating email templates and social media calendars. These are real, practical uses where AI saves you time. (We covered these in our first post.)

Verify before you act. If AI gives you numbers, check one or two against a real source before making decisions based on them. Your CRM has your actual customer data, service mix, and revenue numbers. Google Analytics has your real traffic. The Census Bureau has your actual local demographics. Five minutes of verification is the difference between a solid starting point and an expensive assumption.

Ask it to show its work. “Can you provide a source for that?” is a simple follow-up that often reveals whether a claim is based on something real or something the tool generated on its own. If it provides links, click them. They don’t always lead where you’d expect. If it can’t provide a source, that tells you how much weight to give the claim.

Ask better questions. The quality of what you get out of AI depends entirely on what you put in. Provide real data from your business. The numbers in your CRM, not estimates from memory. Be specific about what you want. And add one sentence that changes everything: “If you’re inferring or estimating anything, tell me.”

Start fresh every time. New question, new conversation. Don’t carry context from a previous chat into an evaluation. The AI will reflect your earlier input back to you, and it’ll feel like validation when it’s actually an echo chamber.

What’s Coming Next

We’re putting together a comprehensive guide that goes deeper on every topic we’ve covered in this series, and quite a bit more. It’s called The Shop Owner’s Guide to AI in Marketing. It covers how to evaluate AI output, how to write better prompts, where AI adds real value and where it falls short, and what questions to ask the people who manage your online presence. Click the link below to Get on the List and be the first to know when this guide is released.

In the meantime, if you missed our first post, start there: AI Is a Flashlight, Not a Map.

Heather Myers is the Chief Technology Officer at KUKUI, where she builds marketing and customer engagement technology for independent auto repair shops. Before joining the automotive technology space, she built information systems for public and academic libraries.

This is the second post in our ongoing series, AI Is a Flashlight, Not a Map. New posts publish every two weeks.